I’ve been working with Nesta to put a new intranet in place. It’s been a smooth project, delivered on time and ticking all the requirements boxes.

But I’ve written enough blog posts showing how organisations are saving money by deploying GovIntranet. This time, I thought I’d share some of the lessons that we at Agento have learned from running a project as a small business.

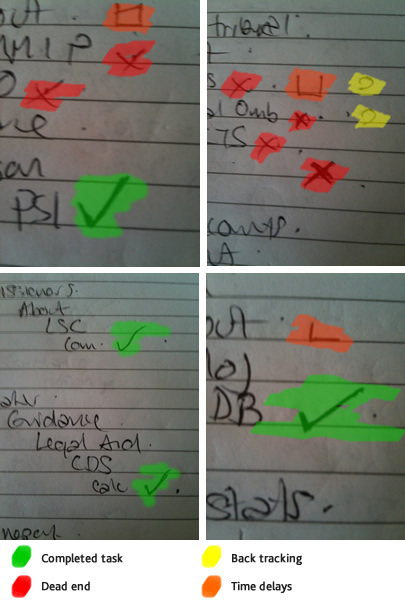

Where I work, we don’t have usability labs, eye-tracking equipment, or even webcams or screen-capture software to test information architecture designs with people. Even so, budget user testing techniques can still provide valuable insights, which in turn lead to recommendations for improvement.
